17 ROAR-Written Vocabulary Assessment Design

17.1 Construct Definition: Vocabulary Recognition in Context

ROAR-Written Vocabulary represents a digital adaptation of the Core Vocabulary Assessment (CVA), originally developed by Elfrieda H. Hiebert in collaboration with Midian Kurland (Elfrieda H. Hiebert 2024). The original CVA was grounded in a criterion-referenced framework emphasizing students’ ability to recognize the meanings of core academic vocabulary in sentence contexts. Hiebert developed the initial items and assessment format across four grade-level forms spanning two grade bands (Grades 3–4 and Grades 5–6), while Kurland conducted comprehensive quality control and led the initial validation work establishing the psychometric foundations of the original CVA. Initial pilot studies were conducted with 11,246 students across two phases (Phase 1: n = 7,901, fall; Phase 2: n = 3,345, spring), with students in each grade band randomly assigned to Form A or Form B. The ROAR implementation maintains this theoretical foundation while incorporating technological enhancements, empirical validation, and refinement within the broader ROAR assessment ecosystem.

ROAR-Written Vocabulary assesses core vocabulary knowledge through students’ ability to recognize synonymous relationships between academically important words and simpler, more familiar alternatives within meaningful sentence contexts. The construct definition emphasizes vocabulary recognition rather than vocabulary breadth—not simply how many words students know overall, but how reliably they can access the meanings of a specific, high-priority set of words they will encounter repeatedly across academic texts. By focusing on general academic vocabulary, the assessment narrows from vocabulary size to vocabulary depth within the words that matter most for academic reading: whether students have consolidated secure, accessible meanings for high-frequency academic words that appear consistently across subject areas and grade levels.

This assessment operationalizes vocabulary knowledge as the capacity to connect academically important words with semantically equivalent but lexically simpler terms, reflecting theoretical and empirical consensus that vocabulary supports reading comprehension through efficient meaning access rather than elaborate definitional knowledge or metalinguistic awareness (Perfetti and Stafura 2014; Galloway and Uccelli 2020). Within the core vocabulary, a particularly important subset consists of words that function as core analytic language—general-purpose terms that cut across subject areas to signal reasoning processes, logical relationships, and text structure (Uccelli 2023). By situating synonym recognition within sentence contexts that mirror authentic reading conditions, the assessment captures vocabulary knowledge as it operates during real reading, where meaning access must be rapid and reliable.

18 Word Selection Methodology

18.1 Core Vocabulary Identification

Target word selection for ROAR-Written Vocabulary drew from approximately 2,500 high-frequency morphological word families that constitute the foundation of academic literacy (Elfrieda H. Hiebert, Goodwin, and Cervetti 2018). Within this framework, the CVA design organizes word families into eight difficulty levels defined by U-function frequency bands, ranging from Level 1 (U = 100–251) through Level 8 (U = 10–13) (Elfrieda H. Hiebert 2024).

The original CVA was developed beginning with Level 4 (U = 45–65) as a proof of concept, targeting vocabulary that begins to appear with consistent density in school texts around fourth grade (Elfrieda H. Hiebert 2024). Prior to the ROAR calibration study, the item pool was expanded to include higher-frequency words (Zone 3 of Hiebert’s Word Zones framework) (Elfrieda H. Hiebert 2012) to improve measurement coverage for lower-performing students. The ROAR-Written Vocabulary item pool therefore spans approximately Levels 3–6, with the greatest concentration in Levels 4–5. These levels represent the general academic vocabulary most critical for reading success in upper elementary grades, a developmental period during which students transition from learning to read to reading to learn.

Within this item pool, variation in empirical item difficulty reflects the combined influence of multiple lexical properties—including U-function frequency, age of acquisition, morphological family size, concreteness, and dispersion—rather than CVA level membership alone.

18.2 Systematic Filtering Procedures

For each CVA level, candidate word selection began with all word families within the corresponding U-function band. For Level 4, the initial candidate pool consisted of 248 word families. A four-step filtering process was applied:

18.2.1 Step 1: Concreteness and word length screening

Words that were both highly concrete and very short were removed. Specifically, words scoring above 4.8 on concreteness norms (Brysbaert, Warriner, and Kuperman 2014) and containing fewer than five letters were excluded. This filter removed 44 words.
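The Step 1 rule is a conjunction: a word is removed only when it is both highly concrete and very short. A minimal sketch of that predicate follows. The `Candidate` class, its field names, and the example ratings are hypothetical and are not taken from the published norms; only the 4.8 cutoff and the five-letter threshold come from the text.

```python
from dataclasses import dataclass

CONCRETENESS_CUTOFF = 4.8  # cutoff stated in the text (norms use a 1-5 scale)
MIN_LETTERS = 5            # words with fewer letters than this count as very short


@dataclass(frozen=True)
class Candidate:
    word: str
    concreteness: float  # hypothetical field holding the concreteness rating


def removed_in_step1(c: Candidate) -> bool:
    """Return True only when the word is BOTH highly concrete and very short."""
    return c.concreteness > CONCRETENESS_CUTOFF and len(c.word) < MIN_LETTERS


# Illustrative ratings only; these are not values from the published norms.
candidates = [
    Candidate("tree", 4.9),      # concrete and short: removed
    Candidate("mountain", 4.9),  # concrete but long: kept
    Candidate("idea", 1.6),      # short but abstract: kept
]
kept = [c.word for c in candidates if not removed_in_step1(c)]
print(kept)  # ['mountain', 'idea']
```

Because both conditions must hold, long concrete words and short abstract words stay in the candidate pool.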

18.2.2 Step 2: Developmental calibration (earlier acquisition)

Words with acquisition trajectories indicating earlier-than-expected mastery were reassigned to higher-frequency (lower-numbered) CVA levels. This step relocated 87 words.

18.2.3 Step 3: Domain specificity screening

Words with narrow domain distribution were removed to ensure focus on general academic vocabulary. Dispersion values from the Educator’s Word Frequency Guide (Zeno et al. 1995) were used to exclude 18 words.

18.2.4 Step 4: Developmental calibration (later acquisition)

Words with later-than-expected acquisition trajectories were reassigned to lower-frequency (higher-numbered) CVA levels. This step relocated 12 words.

Following these steps, 87 words remained in the Level 4 pool. Of these, 40 were used in the initial forms and 47 were retained for future item development.

| Step | Filter Applied | Words Removed/Reassigned | Running Total |
|---|---|---|---|
| — | Initial candidate pool (U = 45–65) | — | 248 |
| 1 | Highly concrete (>4.8; Brysbaert, Warriner, and Kuperman 2014) AND fewer than 5 letters | −44 | 204 |
| 2 | Reassigned to lower grade-level CVA levels (earlier-than-expected mastery) | −87 | 117 |
| 3 | Low dispersion (domain-specific; e.g., atoms, gravity) | −18 | 99 |
| 4 | Reassigned to higher grade-level CVA levels (later-than-expected mastery) | −12 | 87 |
| — | Words used in initial assessment forms | 40 | — |
| — | Reserve pool for additional item generation | 47 | — |

Note. Filtering steps follow the sequence described in Elfrieda H. Hiebert (2024). Developmental trajectory reassignments in Steps 2 and 4 were determined using corpus-based estimates of grade-level word acquisition patterns.
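The running totals in the table can be checked with simple arithmetic. The sketch below uses only the counts reported in the table; the variable names are illustrative.

```python
initial_pool = 248  # Level 4 candidate word families (U = 45-65)

# (step label, number of words removed or reassigned), as reported in the table
steps = [
    ("Step 1: highly concrete and short, removed", 44),
    ("Step 2: earlier acquisition, reassigned", 87),
    ("Step 3: low dispersion, removed", 18),
    ("Step 4: later acquisition, reassigned", 12),
]

remaining = initial_pool
running_totals = []
for label, n in steps:
    remaining -= n
    running_totals.append(remaining)
    print(f"{label}: -{n} -> {remaining}")

used_in_forms, reserve = 40, 47
# The final pool should equal the words used in forms plus the reserve pool.
print(remaining == used_in_forms + reserve)  # True
```

The loop reproduces the Running Total column (204, 117, 99, 87), and the final pool of 87 equals the 40 words used in the initial forms plus the 47 held in reserve.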

18.3 Linguistic Categories

Following initial filtering, the remaining words were grouped to balance representation across linguistic categories while maintaining psychometric equivalence. Words were first matched in pairs on key lexical properties: U-function frequency, word length, concreteness (Brysbaert, Warriner, and Kuperman 2014), age of acquisition (Kuperman, Stadthagen-Gonzalez, and Brysbaert 2012), morphological family size, dispersion, and academic vocabulary ranking (Gardner and Davies 2014). This matching was intended to ensure equivalence across item sets and forms.
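The text does not specify how matched pairs were formed. As one possible illustration only, the sketch below standardizes each lexical property and then greedily pairs the closest unpaired words by Euclidean distance. The function names, the placeholder words (`w1` to `w4`), and the feature values are all hypothetical.

```python
from itertools import combinations
from math import dist
from statistics import mean, pstdev


def zscore_columns(rows):
    """Standardize each property so no single scale dominates the distance."""
    cols = list(zip(*rows))
    stats = [(mean(c), pstdev(c) or 1.0) for c in cols]  # guard constant columns
    return [tuple((v - m) / s for v, (m, s) in zip(row, stats)) for row in rows]


def greedy_pairs(words, features):
    """Pair the closest unpaired words first (an assumed matching strategy)."""
    z = dict(zip(words, zscore_columns(features)))
    ranked = sorted(combinations(words, 2), key=lambda p: dist(z[p[0]], z[p[1]]))
    paired, pairs = set(), []
    for a, b in ranked:
        if a not in paired and b not in paired:
            pairs.append((a, b))
            paired.update((a, b))
    return pairs


# Hypothetical properties: (U-function frequency, word length, concreteness)
words = ["w1", "w2", "w3", "w4"]
features = [(50, 6, 2.0), (51, 6, 2.1), (62, 9, 3.5), (61, 9, 3.4)]
print(sorted(greedy_pairs(words, features)))  # [('w1', 'w2'), ('w3', 'w4')]
```

A greedy approach is simple but not guaranteed to minimize total mismatch across all pairs; an optimal matching algorithm would be a reasonable alternative.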

The balancing process additionally targeted specific distributions across critical word characteristics:

Part-of-speech distribution. A ratio of 9:6:3:2 for nouns, verbs, adjectives, and adverbs, respectively, was applied. This distribution reflects the relative importance of different word classes in academic texts (Elfrieda H. Hiebert, Goodwin, and Cervetti 2018) while avoiding over-representation of adjectives and adverbs, which contribute less to core meaning construction than nouns and verbs.

Morphological complexity representation. Words with varying morphological structures—including simple root words, words with common prefixes and suffixes, and words with multiple morphological components—were included. This approach captures the range of morphological complexity students encounter in academic vocabulary.

Semantic category representation. Marzano and Marzano’s supercluster system (Marzano and Marzano 1988) was used to ensure coverage across major conceptual categories relevant to academic learning. Words were paired within superclusters so that item sets represented a range of semantic relationships, including analysis, comparison, sequence, cause–effect relationships, and abstract concepts.
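The 9:6:3:2 part-of-speech ratio described above sums to 20, so it maps directly onto a 20-word set. The sketch below shows one way to turn the ratio into word counts for a set of any size, using largest-remainder rounding. The set sizes and the `allocate_by_ratio` helper are hypothetical; the source does not state how counts were derived for other sizes.

```python
POS_RATIO = {"noun": 9, "verb": 6, "adjective": 3, "adverb": 2}  # from the text


def allocate_by_ratio(n_items, ratio=POS_RATIO):
    """Split n_items across categories in proportion to the ratio weights."""
    total = sum(ratio.values())
    counts = {pos: n_items * w // total for pos, w in ratio.items()}
    remainders = {pos: n_items * w % total for pos, w in ratio.items()}
    leftover = n_items - sum(counts.values())
    # Give any leftover slots to the categories with the largest remainders.
    for pos in sorted(ratio, key=remainders.get, reverse=True)[:leftover]:
        counts[pos] += 1
    return counts


print(allocate_by_ratio(20))  # {'noun': 9, 'verb': 6, 'adjective': 3, 'adverb': 2}
print(allocate_by_ratio(40))  # {'noun': 18, 'verb': 12, 'adjective': 6, 'adverb': 4}
```

Integer arithmetic keeps the counts exact, and the allocated counts always sum to the requested set size.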

18.4 Item Development Process

18.4.1 Sentence Context Creation

Sentence contexts for ROAR-Written Vocabulary items were developed using corpus-informed procedures drawing from extensive databases of educational texts representative of materials students encounter in academic settings. The process prioritized authentic language patterns while controlling for construct-irrelevant variance.

Corpus-based development. Sentence contexts were developed using the TextBase (Elfrieda H. Hiebert 2025), a database of 10,000 texts spanning multiple genres, subject areas, and grade levels. Sentences were constructed to reflect authentic language patterns students encounter in academic reading, ensuring ecological validity without introducing excessive complexity that might confound vocabulary measurement.

Semantic constraint calibration. Sentence contexts were designed to provide sufficient semantic support for students with knowledge of the target word while avoiding excessive contextual cues that would allow correct responses through inference alone. This balance ensures that performance reflects vocabulary knowledge rather than general comprehension ability.

Syntactic complexity control. Sentences were designed to be accessible across the intended grade range while preserving natural language patterns. Structures were generally simple to moderately complex, with clear subject–verb relationships and minimal embedded clauses. Syntactic accessibility was evaluated through expert review rather than formal metrics.

18.4.2 Response Option Development

Items use a four-option multiple-choice format. Distractors were developed systematically to be plausible but incorrect alternatives that provide meaningful information about student vocabulary knowledge.

Correct response selection. Simple, high-frequency synonyms with lower age of acquisition than target words were selected to represent the intended meaning within sentence contexts. Correct responses were drawn from earlier word zones within the core vocabulary framework (Elfrieda H. Hiebert 2012), ensuring familiarity while maintaining semantic accuracy.

Distractor construction. Distractors were drawn from three sources:

(a) words from earlier word zones (Elfrieda H. Hiebert 2012),

(b) grade-appropriate words identified through research and expert judgment, and

(c) semantically and syntactically plausible alternatives that fit the sentence context but differ in meaning from the target word.

Plausibility calibration. Distractors were designed to fit the sentence context syntactically and broadly semantically without being synonyms of the target word. This makes distractors attractive to students who do not know the target word but not misleading for those who do.

Sample Item

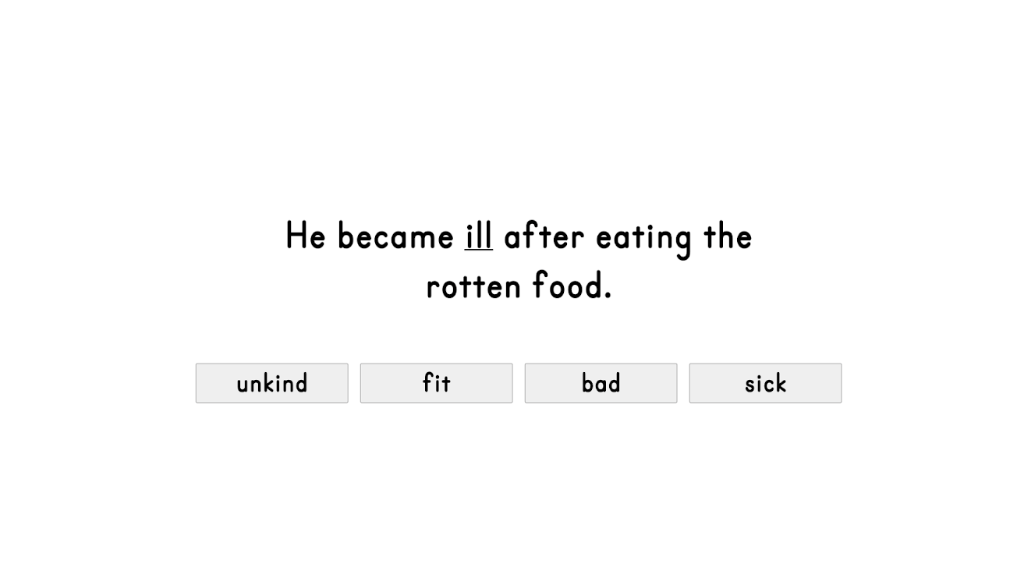

Figure 18.1 shows an example item from ROAR-Written Vocabulary, “He became ill after eating the rotten food.” Although all four choices could fit grammatically in the sentence, the correct answer, “sick”, is the closest synonym to the underlined word, “ill”.

18.5 Quality Assurance and Expert Review

All items underwent systematic review procedures to ensure construct alignment, developmental appropriateness, and freedom from bias or inappropriate content. Expert panels consisting of literacy researchers, educational practitioners, and measurement specialists evaluated items across multiple dimensions:

Construct alignment verification. Items were reviewed to ensure they assessed vocabulary knowledge as operationally defined, rather than related but distinct constructs such as reading comprehension, background knowledge, or reasoning ability. Reviewers examined whether successful performance required knowledge of the target word and whether alternative pathways to correct responses might compromise construct validity.

Developmental appropriateness evaluation. Items were evaluated for appropriate levels of challenge across intended grade ranges. Reviewers considered whether vocabulary, sentence complexity, and content remained accessible across the developmental span, and whether background knowledge demands exceeded reasonable expectations for elementary students.

Bias and sensitivity review. Items were examined for content that might introduce differential difficulty for students from diverse linguistic, cultural, or socioeconomic backgrounds. A multidisciplinary team—including former classroom teachers, literacy researchers, and measurement specialists—identified and eliminated items containing cultural references, colloquial expressions, or other features that could introduce systematic bias unrelated to vocabulary knowledge.

18.6 Assessment Structure and Format

18.6.1 Administration Format and Sequence

ROAR-Written Vocabulary employs a web-based administration format integrated with the broader ROAR assessment platform. Students access the assessment through standard web browsers on devices commonly used in educational settings, including desktop computers, laptops, tablets, and Chromebooks. The assessment begins with automated instructions delivered through narration, ensuring consistent administration procedures across contexts.

The assessment sequence includes brief practice items that familiarize students with the response format and confirm technical functionality prior to scored items. Practice items use vocabulary and sentence structures within students’ expected knowledge range, allowing focus on procedural understanding rather than content difficulty. Following practice, students proceed through the assessment independently, answering as many items as possible within a five-minute time limit designed to balance careful reading with efficient responding.

The current fixed-form administration is designed with future computer-adaptive testing (CAT) implementation in mind, which will improve efficiency by tailoring item selection to individual student ability levels.

18.6.2 Item Presentation and Response Collection

Each item presents a complete sentence containing a target vocabulary word highlighted in bold. The sentence appears above four response options arranged vertically, with clear visual separation between the sentence and response choices. Students select responses using mouse clicks, touchscreen input, or keyboard navigation, supporting diverse devices and accessibility needs.

Response time data are collected automatically for each item, providing additional information about processing efficiency and engagement.

18.6.3 Scoring and Data Quality Procedures

ROAR-Written Vocabulary employs dichotomous scoring: students receive full credit for correct responses and no credit for incorrect responses. This approach aligns with the assessment’s focus on vocabulary recognition—whether students can access the meaning of a core academic vocabulary word in context.

Student performance is summarized using percent correct, representing the proportion of items answered correctly out of those completed. This metric reflects the extent to which students have established reliable access to meanings of high-frequency academic vocabulary. Lower scores suggest that students have not yet consolidated meanings for core academic vocabulary, including the core analytic language they will encounter repeatedly in academic reading, highlighting a key area for instructional support.
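As a minimal sketch of this scoring rule, the function below computes percent correct over completed items only. Representing items not reached within the time limit as `None` is an assumption made for illustration; the platform's actual data format is not described here.

```python
def percent_correct(responses):
    """Percent correct over completed items.

    responses: list of True (correct), False (incorrect), or None
    (item not reached; an assumed encoding for this sketch).
    Returns None when no items were completed.
    """
    completed = [r for r in responses if r is not None]
    if not completed:
        return None
    return 100.0 * sum(completed) / len(completed)


# Four items completed (three correct), two not reached before time ran out.
print(percent_correct([True, True, False, True, None, None]))  # 75.0
```

Because unreached items are excluded from the denominator, two students with the same percent correct may have completed different numbers of items.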

Norm-referenced scores, including percentile rankings and grade-level comparisons, will be available through the ROAR platform once sufficient data have been collected to support reliable norm development. Guidance will be provided to educators for interpreting these scores alongside assessment results.

The assessment structure supports efficient administration while maintaining the measurement precision necessary for educational decision-making. Integration with the ROAR platform enables automated scoring, seamless data collection, and immediate availability of results.

18.7 Quality Assurance and Assessment Refinement

ROAR-Written Vocabulary incorporates multiple quality assurance procedures to ensure reliable and fair measurement across diverse student populations. These procedures were implemented throughout development and continue to guide ongoing refinement. Bias and sensitivity review and differential item functioning (DIF) analyses were conducted to minimize bias and ensure equitable measurement across student subgroups. Results of these analyses are reported in the calibration section.

18.8 Ongoing Assessment Refinement

ROAR-Written Vocabulary is continuously refined using performance data collected across diverse student populations. Item performance monitoring evaluates how individual items function over time, identifying items with unexpected difficulty patterns, poor discrimination, or inconsistent performance across groups.
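The specific statistics used for item monitoring are not detailed in this section. As an illustration under that caveat, the sketch below computes two common classical indices from a scored response matrix: item difficulty (proportion correct) and discrimination (correlation between the item and the rest score). The function name, the complete-data assumption, and the toy matrix are hypothetical.

```python
from statistics import mean, pstdev


def item_stats(matrix):
    """matrix[s][i] is 1 or 0 for student s on item i (complete data assumed)."""
    results = []
    for i in range(len(matrix[0])):
        item = [row[i] for row in matrix]
        rest = [sum(row) - row[i] for row in matrix]  # total score excluding item i
        sx, sy = pstdev(item), pstdev(rest)
        if sx == 0 or sy == 0:
            r = None  # correlation is undefined when either variable is constant
        else:
            mx, my = mean(item), mean(rest)
            cov = mean((x - mx) * (y - my) for x, y in zip(item, rest))
            r = cov / (sx * sy)
        results.append({"item": i, "p": mean(item), "r_rest": r})
    return results


# Toy data: 4 students by 3 items (illustrative values only).
matrix = [
    [1, 1, 1],
    [1, 1, 0],
    [1, 0, 0],
    [0, 0, 1],
]
for row in item_stats(matrix):
    print(row)
```

In this toy matrix the third item has a negative item-rest correlation, the kind of pattern that would prompt review of an item.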

Regular review cycles use accumulated data to identify items requiring revision or replacement. This iterative process ensures the assessment remains valid, fair, and appropriately challenging. Feedback from educators and administrators further informs refinement, ensuring the assessment meets practical instructional needs while maintaining strong measurement properties.