4 Assessment Development Framework

4.1 Assessment Development Methodology of ROAR-Comp

The following section provides technical detail on our assessment development methodology. Teachers and school administrators implementing ROAR-Comp may find this information useful for understanding score interpretation; those seeking guidance on administration and use can proceed to the specific task of interest (e.g., Syntax, Morphology, Written Vocabulary, and Inference). For further information on our assessment development framework, see the Assessment Development Framework.

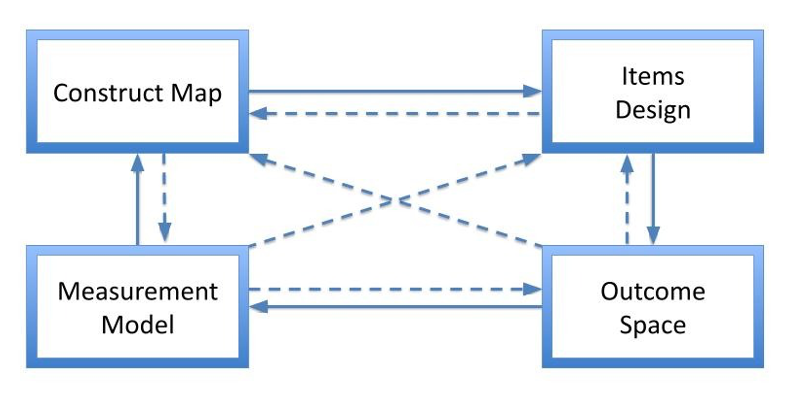

The ROAR-Comp Suite is developed using the BEAR Assessment System (BAS) (Wilson 2005, 2023)—a common framework for principled assessment design and validation (see Figure 4.1). The BAS organizes the development process into four interrelated components: (a) the construct map, (b) item design, (c) the outcome space, and (d) the measurement model/Wright Map.

The construct map defines each targeted construct (e.g., morphology, inference, vocabulary, syntax) along a developmental continuum, anchored by qualitatively distinct levels of performance. This map represents the hypothesized structure of the construct and serves as a blueprint for the development of items, distractors, and scoring rules.

Once items are piloted and scored, response data are calibrated using models from the Rasch family, placing both students and items on a common logit scale. The results are visualized on the Wright Map, which serves as an empirical realization of the construct map. This visualization provides a common framework for interpreting logit scores (and their transformations), anchored by bands of item parameters aligned with the hypothesized developmental continuum. The Wright Map also provides valuable feedback to test developers about necessary adjustments to items, scoring guides, and/or the hypothesized construct structure, thereby supporting iterative refinement of the assessment.
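To make the idea of a common logit scale concrete, the following is a minimal sketch that assumes the simplest member of the Rasch family, the dichotomous Rasch model, in which the probability of a correct response depends only on the difference between student ability and item difficulty. The function names and the text-based binning are illustrative assumptions, not the ROAR-Comp calibration code, and the specific Rasch-family model used for each task may differ.

```python
import math
from collections import Counter


def rasch_probability(theta, b):
    """P(correct) under the dichotomous Rasch model.

    theta: student ability in logits; b: item difficulty in logits.
    (Illustrative assumption: the operational model may be another
    member of the Rasch family.)
    """
    return 1.0 / (1.0 + math.exp(-(theta - b)))


def wright_map_rows(abilities, difficulties, lo=-3, hi=3):
    """Bin students and items on the same integer logit grid.

    Returns (logit_level, n_students, n_items) tuples ordered from the
    top of the scale (most able students, hardest items) to the bottom,
    which is the layout a Wright Map displays graphically.
    Values outside [lo, hi] are clamped to the nearest edge.
    """
    students = Counter(min(hi, max(lo, round(t))) for t in abilities)
    items = Counter(min(hi, max(lo, round(b))) for b in difficulties)
    return [(level, students[level], items[level])
            for level in range(hi, lo - 1, -1)]


if __name__ == "__main__":
    # Hypothetical ability and difficulty estimates, for illustration only.
    for level, n_students, n_items in wright_map_rows(
            [0.2, 1.1, -0.9], [0.0, 2.4]):
        print(f"{level:+d} | {'X' * n_students:<5} | {'#' * n_items}")
```

When a student's ability equals an item's difficulty, the model gives a 0.5 probability of success, which is what allows students and items to be read against each other on the same axis.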

4.2 Iterative Development Cycle

Each ROAR-Comp assessment follows a systematic four-cycle iterative process consistent with the BEAR Assessment System framework, ensuring that theoretical constructs are continually validated and refined through empirical evidence to enhance measurement precision and instructional utility.

The initial development cycle establishes foundational elements through comprehensive literature review and theoretical framework development, leading to initial construct map creation. This cycle includes pilot item creation designed to capture the full range of construct complexity, followed by expert panel review to ensure construct alignment and developmental appropriateness, and concludes with initial field testing using small samples to identify potential issues before large-scale implementation.

The refinement cycle focuses on improving assessment quality through systematic analysis of pilot data to identify items requiring modification or elimination. Item revision is guided by empirical performance data, ensuring that theoretical predictions align with actual student response patterns, while the construct map undergoes refinement based on observed difficulty progressions and student performance distributions.

The validation cycle represents the comprehensive empirical evaluation phase, involving large-scale field testing (with hundreds or thousands of students) to establish robust psychometric properties. Comprehensive psychometric analysis using Rasch modeling provides the foundation for final item selection based on strict quality criteria. The construct map receives empirical validation through the establishment of cut scores based on actual student performance data, while external validity studies demonstrate the assessment’s relationships with established achievement measures.

The implementation preparation cycle focuses on developing the infrastructure necessary for operational use, including computer adaptive testing algorithm development to improve efficiency while maintaining measurement precision. Score reporting systems are designed to ensure that results are accessible and interpretable for educators, and administrator training materials are created to support standardized implementation across diverse educational contexts.

4.3 Ongoing Research and Development Across the ROAR-Comp Suite

Several initiatives are underway to enhance the ROAR-Comp assessments and expand their applications across different educational contexts. Computer-adaptive testing (CAT) is being implemented for all ROAR-Comp measures to improve assessment efficiency while maintaining measurement precision. The current fixed-form approaches will be supplemented with adaptive algorithms that select items based on student response patterns, reducing administration time while providing more precise ability estimates by targeting items to each student’s ability level.
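One common way to target items to a student's ability level is maximum-information selection. The sketch below assumes a dichotomous Rasch model, a standard normal prior, and expected a posteriori (EAP) ability estimation on a fixed grid. These choices, along with all function names, are assumptions made for illustration. The text does not specify which selection rule or estimator the ROAR-Comp CAT algorithms use.

```python
import math


def p_correct(theta, b):
    """Dichotomous Rasch probability of a correct response (logit scale)."""
    return 1.0 / (1.0 + math.exp(-(theta - b)))


def item_information(theta, b):
    """Fisher information of a Rasch item at ability theta: p * (1 - p)."""
    p = p_correct(theta, b)
    return p * (1.0 - p)


def select_next_item(theta, difficulties, administered):
    """Index of the unadministered item that is most informative at theta.

    Under the Rasch model this is the item whose difficulty is closest
    to the current ability estimate. Raises ValueError if every item
    has already been administered.
    """
    candidates = [i for i in range(len(difficulties)) if i not in administered]
    return max(candidates, key=lambda i: item_information(theta, difficulties[i]))


def eap_estimate(responses, difficulties, n_points=81):
    """EAP ability estimate with a standard normal prior on [-4, 4].

    responses: dict mapping item index -> 0 (incorrect) or 1 (correct).
    """
    grid = [-4.0 + 8.0 * k / (n_points - 1) for k in range(n_points)]
    weights = []
    for theta in grid:
        w = math.exp(-0.5 * theta * theta)  # unnormalized N(0, 1) prior
        for i, x in responses.items():
            p = p_correct(theta, difficulties[i])
            w *= p if x == 1 else 1.0 - p
        weights.append(w)
    return sum(t * w for t, w in zip(grid, weights)) / sum(weights)


if __name__ == "__main__":
    bank = [-2.0, 0.1, 1.5]   # hypothetical item difficulties in logits
    theta, responses = 0.0, {}
    item = select_next_item(theta, bank, set(responses))
    responses[item] = 1       # suppose the student answers correctly
    theta = eap_estimate(responses, bank)
    print(item, round(theta, 2), select_next_item(theta, bank, set(responses)))
```

An EAP estimate is used here because it stays finite even when a student has answered every item so far correctly or incorrectly, a situation in which a maximum-likelihood estimate would diverge.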

Expansion to additional grade levels is planned based on validation results and educator feedback. While current versions target specific grade bands, research is underway to develop versions appropriate for younger and older students, with modifications to content complexity and item design that maintain theoretical coherence while addressing developmental differences across age ranges.

Integration with learning management systems and existing assessment platforms is being developed to streamline administration and score reporting across all ROAR-Comp measures. Enhanced reporting features will provide more detailed diagnostic information for educators, including specific recommendations for instructional interventions based on student response patterns. These reports will synthesize performance across multiple ROAR-Comp assessments to provide comprehensive profiles of students’ reading comprehension strengths and needs.