23 ROAR-Inference Theoretical Background

23.1 The Simple View of Reading

According to the Simple View of Reading, reading comprehension depends on two essential components: decoding (the ability to recognize written words) and linguistic comprehension (the ability to understand the meaning of language). Both are necessary for accurate reading comprehension (Fethi and Józsa 2026; Hoover and Gough 1990; Verhoeven and Leeuwe 2012; Nordström, Fälth, and Danielsson 2025). A student might decode fluently but struggle to integrate multiple pieces of evidence into coherent explanations. Another student might decode more slowly but, once they recognize the words, demonstrate sophisticated meaning-making. Understanding both components therefore provides a more complete picture of reading comprehension development. ROAR-Inference is a measure of reading comprehension that specifically targets the linguistic comprehension component of the Simple View of Reading: how students construct and evaluate coherent meaning when processing connected text.

23.2 How Students Make Meaning from Text

When students read, they constantly encounter gaps between what’s explicitly stated and what they need to understand (Biancarosa 2026; Blum et al. 2020; Graesser, Singer, and Trabasso 1994; Kendeou 2015; Rice and Wijekumar 2024; Trabasso 1980). A passage might tell us that “a coach leads her team to victory, wins the biggest prize, and then shares her prize with the team,” but it doesn’t explicitly state why she made this choice. Students must make an inference by constructing an explanation. The question is: How well do students construct and evaluate those explanations?

This is fundamentally a question of coherence: how well students’ explanations fit together with, or overlap with, the information in the text and their world knowledge (Carlson, Broek, and McMaster 2022; Harman 1980; Miller 2019; Read and Marcus-Newhall 1993; Sloman 1994; Thagard 1989; Trabasso, Suh, and Payton 1995). Consider a proficient reader encountering the coach passage. They spontaneously wonder “Why did she do this?” and integrate multiple textual clues (e.g., the team’s collaborative effort, their role in the victory, the shared nature of the prize) to construct an explanation: Because the coach recognized that her team’s contribution merited recognition. This reader has woven together pieces of the text into a coherent, well-supported explanation.

A struggling reader might notice the same surface details but draw a different conclusion: Because she won the biggest prize, treating the prize size as the explanation without connecting it to the deeper pattern of the team’s contribution. Or worse, a reader might construct an explanation based on general knowledge about human nature: Because she wanted to be famous, without checking whether the passage actually supports this interpretation.

These three responses (the coherent explanation, the partially connected detail, and the text-disconnected plausible guess) reveal something important about how students construct meaning. The proficient reader checks whether an explanation accounts for the available evidence in the text, whether it requires assumptions not supported by the passage, and whether it holds up when compared to alternatives. The struggling readers skip these evaluative steps, either focusing on surface details or defaulting to background knowledge without grounding it in the text’s causal network, that is, the relations among ideas that support different types of causal connections (e.g., psychological causation, physical causation, enablement) (Carlson, Broek, and McMaster 2022; Davison, Biancarosa, et al. 2018; Trabasso 1980; Warren, Nicholas, and Trabasso 1979).

| Term | Definition |
| --- | --- |
| Inference | The process of generating information that is implied but not explicitly stated in text |
| Coherence | The degree to which elements of a text and mental representation connect, or overlap, logically and meaningfully |
| Local coherence | Explicit connections between sentences or ideas in the text that form the inferential response |
| Global coherence | Explicit connections between sentences or ideas in the text and one’s knowledge base that form the inferential response, creating a relatively more macro-level mental representation |
| Explanatory coherence | Degree of overlap, or strength of connections, between the information in the passage, question stem, and explanation |
| Event-chain relations | Connections between narrative events, including logical, informational, and evaluative relationships |
| Logical meaning-making | Understanding causality and motivation (why and how): connecting events through causal relationships and character motivations |
| Informational meaning-making | Tracking informational story elements and identifying which details are essential to understanding the narrative (who, what, where, when) |
| Evaluative meaning-making | Recognizing the broader significance of events and understanding what those events reveal about lessons, morals, and thematic meaning |
| Text-explicit information | Information stated directly in the text with grammatical links to questions |
| Text-implicit information | Information requiring connection of multiple text elements without grammatical links |
| Script-implicit information | Information requiring integration of text with background knowledge and cognitive schemas |
| Knowledge-base inferences | Inferences that draw on background knowledge to fill gaps not explicitly stated in the text, including goal inferences, causal-antecedent inferences, and referential inferences |
| Literal comprehension | Understanding information stated directly in text; distinguished from inferential comprehension, which requires constructing implied meaning |

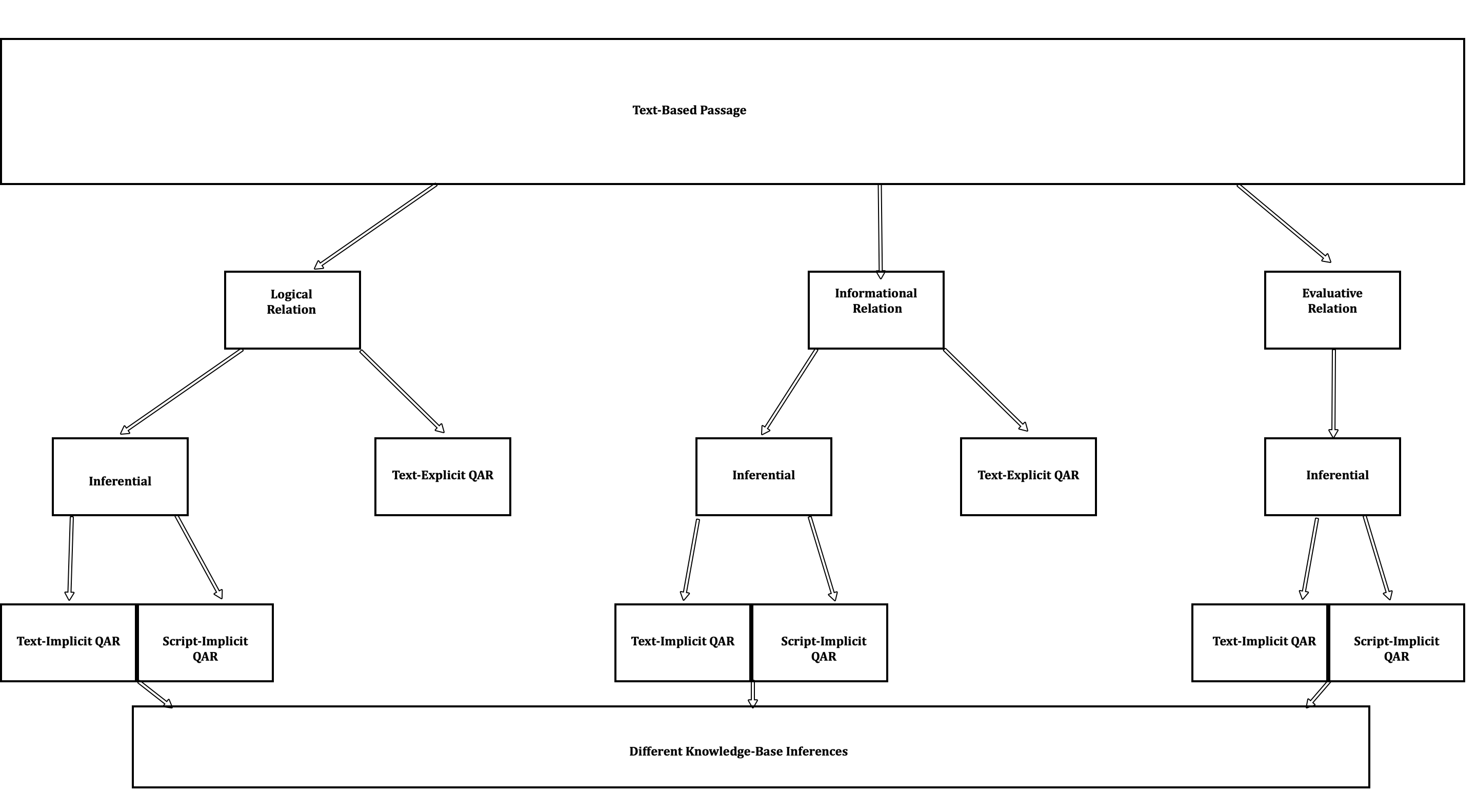

23.3 Three Types of Meaning-Making Relations

We are hardwired for narratives (Cohn et al. 2012; Mar 2004), and much of our lives and memories can be situated as narratives (Bruner 1991). As students read narratives (sequences of events and characters’ actions or states found across genres and text types, including fictional stories and nonfiction accounts such as biographies, historical narratives, and science-based media), they use their world knowledge to construct explanations across different types of meaning-making relations (Blum et al. 2020; Kintsch 1988; Zhang, Prykanowski, and Koppenhaver 2023; Zwaan, Langston, and Graesser 1995). Understanding these types of relations helps teachers recognize where students struggle in their meaning-making processes. These relations can be logical (i.e., causal) (Arslan and Kominsky 2026; Biancarosa 2026; Davison, Biancarosa, et al. 2018; Habermas and Silveira 2008; Hsu 2013; Ness-Maddox et al. 2023; Prat, Mason, and Just 2011; Broek 1990), informational (Haenggi, Kintsch, and Gernsbacher 1995; Pagkratidou, Galati, and Avraamides 2026; Pettijohn and Radvansky 2016; Rinck and Weber 2003; Zwaan 1996; Zwaan, Langston, and Graesser 1995), or evaluative (Blum et al. 2020, 2026; Jolin and Wilson 2022; Özyürek and Trabasso 1997). All three stem from event-chain relations within text, capturing the how, why, who, what, where, and when of events, as well as the appraisals and lessons that make narratives so rich (Arslan and Kominsky 2026; Blum et al. 2026; Bodner et al. 2015; Warren, Nicholas, and Trabasso 1979).

Logical relations address causality and motivation: Why did a character act this way? How are events connected? When reading the coach example, students engage in logical meaning-making, asking why the coach shared her prize, and construct explanations based on causal relationships in the story. A proficient reader connects the coach’s action to her apparent values about recognizing others’ contributions, drawing on a relevant schema (Anderson and Pearson 1984; Bower, Black, and Turner 1979; Mandler 2014; Schank and Abelson 2013). In this case, it is a team schema: a knowledge structure that represents ideas related to teams, including generic knowledge (e.g., how teams typically behave) and specific knowledge (e.g., memories of what it means to be on a team) (Graesser, Singer, and Trabasso 1994).

Informational relations address informational story elements: Who is involved? What happened? Where did it happen? When did it happen? When reading the coach passage, students track that there is a coach, a team, a prize, and multiple people involved. However, informational meaning-making can go beyond simply noticing these elements: it can involve determining which of these elements are actually essential to understanding the story and which are interesting but superfluous. Understanding that the team’s contribution matters is different from simply noting that a prize exists.

Evaluative relations address significance and deeper understanding: What does this reveal about the character? What is the lesson or theme? In the coach example, evaluative meaning-making involves recognizing what the coach’s choice reveals about her character (that she values fairness and recognizes others’ contributions) and potentially understanding the broader message about teamwork or leadership.

A complete understanding of the coach passage requires all three: understanding why she acted (logical), which details matter (informational), and what it reveals about her values (evaluative).

23.4 Existing Approaches to Assessing Inference

Inference is widely recognized as a critical component of comprehension, and numerous approaches to assessing it exist across modalities (text, image, video) (Basaraba et al. 2013; Blum et al. 2026, 2020; Davison, Biancarosa, et al. 2018; Kendeou et al. 2021; Medeiros et al. 2025; Petersen and Spencer 2023; Rochat, Lima, and Bressoux 2025). Traditional standardized measures typically assess inference through multiple-choice items or open-ended questions embedded in broader reading comprehension batteries (such as the Woodcock-Johnson Tests of Achievement (Schrank, McGrew, and Woodcock 2001), the Wechsler Individual Achievement Test, or state standardized assessments). More specialized inference assessments include the Multiple-Choice Online Causal Comprehension Assessment (MOCCA; Biancarosa et al. 2019; Davison, Biancarosa, et al. 2018), whose items focus specifically on causal inference in goal-driven narratives using a cloze-task format; the Minnesota Inference Assessment (Kendeou et al. 2021), whose items focus on bridging and elaborative inferences while students watch videos; the Comprehension, Understanding, Decoding, and Educational Development Assessment (CUBED-3; Petersen and Spencer 2023), which focuses on within-text and elaborative inferences; the Riddle Knowledge Inference Test (R-Kit; Rochat, Lima, and Bressoux 2025), whose items focus on the location of the action, the time of the action, the character’s action, and the objects used; and the Integrative-Inferential Reasoning Assessment (Blum et al. 2020, 2026), a multimodal assessment whose items focus on motivational, evaluative, and metacognitive reasoning across text-plus-comic and text-only modalities.

However, existing inference assessments share several limitations that constrain their utility. Many focus narrowly on one type of inference (such as causal or bridging inferences) without capturing the full breadth of meaning-making students must engage in across different types of relations. Additionally, existing measures often do not systematically examine different levels of coherence (e.g., local vs. global) (Blum et al. 2020, 2026; Graesser, Singer, and Trabasso 1994; Language and Reading Research Consortium (LARRC) and Muijselaar 2018) or types of coherence, such as explanatory coherence: the degree to which students’ explanations integrate evidence, account for multiple pieces of information, and hold up under scrutiny (Carlson, Broek, and McMaster 2022; Harman 1980; Miller 2019; Read and Marcus-Newhall 1993; Sloman 1994; Thagard 1989; Trabasso, Suh, and Payton 1995).

Assessments also often treat distractors as simply incorrect options rather than leveraging them to reveal students’ reasoning processes (Biancarosa et al. 2019; Liu et al. 2019). Consequently, educators learn whether students answered correctly but gain limited insight into how students approached the task or which aspects of meaning-making they struggled with. Without these details, assessments cannot distinguish between a student who understands textual information but fails to integrate it into coherent meaning and a student who over-relies on background knowledge without grounding it in textual evidence (Carlson, Broek, and McMaster 2022).

Formative assessments also face two challenges that require attention. First, there is a trade-off between efficiency and instructional utility: group-administered reading measures provide efficient screening and identification of students requiring support, yet they often lack the instructionally actionable information needed to understand why students struggle and how to target intervention. Second, they often lack strong internal-structure validity arguments (Kane 2001; Newton 2012; M. Wilson 2023), with evidence rarely extending beyond accuracy and reliability (Israel and Duffy 2009).

When teachers administer reading assessments to identify students’ reading comprehension ability, they typically receive scores indicating overall performance levels—information sufficient for screening and benchmarking purposes; however, they often receive little information about why specific students struggle, what particular processing difficulties require targeted intervention, or how to match intervention intensity and focus to individual student needs. Conversely, assessments designed to provide rich diagnostic information about student reasoning processes usually demand substantial administration and scoring time, making frequent progress monitoring and universal screening impractical in busy school contexts. This efficiency-utility gap constrains educators’ ability to implement evidence-based instructional practices that require both reliable measurement and specific diagnostic information matched to individual student needs (Pellegrino, Chudowsky, and Glaser 2001; Snow and Mandinach 1999).

Like other inference assessments and instructional strategies that employ short passages with immediate inference measurement to isolate this comprehension process (Barth, Ankrum, and Newman Thomas 2024; Barth, Vaughn, and McCulley 2015; Rice and Wijekumar 2024; Rice et al. 2024; Rochat, Lima, and Bressoux 2025), ROAR-Inference uses carefully constructed short passages. This approach allows students to engage with a broader range of topics in a single testing session, reducing confounding effects from topic-specific or domain knowledge (Kate Cain et al. 2024; Carlson, Broek, and McMaster 2022; Garth-McCullough 2008; Johnston and Pearson 1982; R. Smith et al. 2021). With longer passages, students read about fewer topics, and their overall performance is more heavily influenced by familiarity with those particular topics.

23.5 Theoretical Foundation: Coherence and Event-Chain Relations

The construct measured by ROAR-Inference is grounded in several theories of coherence (Adams and Khoo 1993; Blum et al. 2020; Carlson, Broek, and McMaster 2022; Cevasco and Buralli 2023; Frith and Happé 1994; Graesser, Singer, and Trabasso 1994; Read and Marcus-Newhall 1993; Sloman 1994; E. Smith and Hancox 2001; Thagard 1989; Trabasso, Suh, and Payton 1995), coupled with the event-chain framework (Arslan and Kominsky 2026; Rinck and Weber 2003; Warren, Nicholas, and Trabasso 1979). Together, these frameworks conceptualize reading comprehension as students’ ability to construct and evaluate coherent explanations across different types of meaning-making relations (logical, informational, evaluative).

Theories of explanatory coherence posit that explanations gain strength when they account for multiple pieces of evidence (breadth), require fewer additional assumptions (simplicity), and can be supported by textual details (textual grounding) (Carlson, Broek, and McMaster 2022; Harman 1980; Miller 2019; Read and Marcus-Newhall 1993; Sloman 1994; Thagard 1989; Trabasso, Suh, and Payton 1995). The event-chain framework identifies three qualitatively different types of relations that organize meaning-making: logical relations explain causality and motivation, informational relations track informational event elements and their referential connections, and evaluative relations address broader significance and thematic meaning. By integrating these frameworks, ROAR-Inference operationalizes a comprehensive view of inferential reasoning that captures not just whether students answer correctly, but how they construct and evaluate meaning across multiple relations and among competing explanations.
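To make the three criteria (breadth, simplicity, textual grounding) concrete, the toy Python sketch below compares the three coach explanations from Section 23.2. It is an illustration only, not how ROAR-Inference items are constructed or scored: the clue labels, the `Explanation` class, and the scoring rule (breadth minus unsupported assumptions, with text-grounded explanations ranked first) are hypothetical simplifications. Computational models of explanatory coherence such as Thagard’s ECHO use a connectionist constraint-satisfaction network rather than an additive score like this one.

```python
from dataclasses import dataclass

# Hypothetical clue labels a reader might draw from the coach passage
# (illustrative only; not ROAR-Inference item content).
TEXT_CLUES = {"team effort", "team role in victory", "prize shared", "prize is biggest"}


@dataclass
class Explanation:
    label: str
    clues_explained: set[str]  # textual clues the explanation accounts for
    extra_assumptions: int     # assumptions the passage does not support

    def breadth(self) -> int:
        # Breadth: how many clues from the text the explanation covers.
        return len(self.clues_explained & TEXT_CLUES)

    def grounded(self) -> bool:
        # Textual grounding: supported by at least one clue in the passage.
        return self.breadth() > 0

    def score(self) -> int:
        # Toy heuristic: reward breadth, penalize each unsupported assumption
        # (fewer assumptions stands in for simplicity).
        return self.breadth() - self.extra_assumptions


candidates = [
    Explanation("she wanted to be famous", set(), 1),
    Explanation("she won the biggest prize", {"prize is biggest"}, 0),
    Explanation(
        "the team's contribution merited recognition",
        {"team effort", "team role in victory", "prize shared"},
        0,
    ),
]

# Rank grounded explanations first, then by the toy coherence score.
ranked = sorted(candidates, key=lambda e: (e.grounded(), e.score()), reverse=True)
for e in ranked:
    print(f"{e.label}: breadth={e.breadth()}, assumptions={e.extra_assumptions}, "
          f"grounded={e.grounded()}, score={e.score()}")
```

Under these assumptions, the ranking mirrors the ordering described in Section 23.2: the coherent explanation first, the partially connected detail second, and the text-disconnected guess last.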

ROAR-Inference was developed to address these gaps by providing a measure that is broad (assessing all three types of meaning-making rather than focusing narrowly on one inference type), theoretically grounded (explicitly operationalizing coherence evaluation across event-chain relations), and descriptively rich (using response options that reveal specific reasoning patterns and coherence levels rather than simply marking answers as correct or incorrect). This approach allows educators to understand the particular strengths and instructional needs of individual students in ways that traditional inference assessments do not support.

23.6 Connection to Reading Comprehension Development

ROAR-Inference measures students’ ability to engage in these three types of meaning-making while ensuring their reasoning is grounded in and supported by the text. As students develop as readers, they become increasingly sophisticated at:

- Integrating multiple pieces of textual evidence
- Checking whether explanations are supported by what the text actually says (implicitly and explicitly)
- Comparing competing explanations and evaluating which is better supported

These abilities are essential for reading success. Students who struggle with coherent meaning-making may understand individual sentences but fail to construct an integrated understanding of a passage. Poor comprehenders often retain the surface form of the sentences they read but fail to retain the gist or meaning (Oakhill and Cain 2012). They can repeat back individual sentences accurately, yet struggle to integrate information across sentences to build a coherent picture, particularly when inferences are required (K. Cain et al. 2001; Kate Cain and Oakhill 1999; Carlson, Broek, and McMaster 2022). This difficulty with automatic integration can create significant bottlenecks in later grades, when texts demand more sophisticated meaning-making (Barth, Ankrum, and Newman Thomas 2024; Barth, Vaughn, and McCulley 2015; K. Cain et al. 2001; Kate Cain and Oakhill 1999; Perfetti and Stafura 2014; Rice and Wijekumar 2024; Rice et al. 2024).

ROAR-Inference assesses these meaning-making abilities across the three types of relations (logical, informational, evaluative) and varying levels of cognitive demand, providing educators with information about how students construct and evaluate meaning from text. Like other ROAR measures, ROAR-Inference is designed to be quick, efficient, and precise. Rather than using longer passages, ROAR-Inference uses carefully constructed passages that are two to six sentences long, with a mean passage length of 3.6 sentences (1/34 stories had 6 sentences, 5/34 had 5 sentences, 8/34 had 4 sentences, 17/34 had 3 sentences, and 2/34 had 2 sentences). This length reduces working memory demands that can confound inference assessment (Yuill, Oakhill, and Parkin 1989) and minimizes reliance on background knowledge or text memory (Carlson, Broek, and McMaster 2022; Kate Cain et al. 2024; E. Wilson et al. 2024), allowing for a relatively more precise measure of inference-making ability.
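The mean passage length is a weighted average of the length distribution: each length (in sentences) is multiplied by the number of passages with that length, and the sum is divided by the number of passages. The minimal Python sketch below recomputes it from the counts listed above; the dictionary and function names are illustrative only. As listed, the counts cover 33 passages and 118 sentences, giving a mean of about 3.58 sentences, which rounds to the reported 3.6.

```python
# Passage-length distribution as listed in the text:
# number of sentences in a passage -> number of passages with that length.
reported_counts = {6: 1, 5: 5, 4: 8, 3: 17, 2: 2}


def length_summary(counts: dict[int, int]) -> tuple[int, int, float]:
    """Return (number of passages, total sentences, mean sentences per passage)."""
    n_passages = sum(counts.values())
    total_sentences = sum(length * n for length, n in counts.items())
    return n_passages, total_sentences, total_sentences / n_passages


n_passages, total_sentences, mean_length = length_summary(reported_counts)
print(n_passages, total_sentences, round(mean_length, 2))
```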